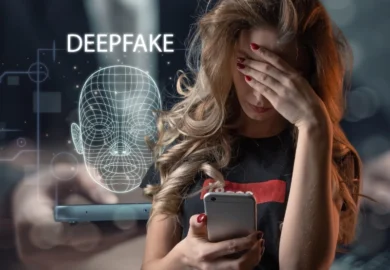

Artificial intelligence has made it possible to create highly realistic images and videos in a matter of minutes. While that technology has legitimate uses, it has also led to a disturbing trend: the rise of AI-generated deepfakes and non-consensual sexual images. For victims, the impact is immediate and personal. Even when the content is fabricated, it can spread quickly, damage reputations, and cause lasting emotional harm. As these cases become more common, an important legal question follows: who can be held responsible?

The answer depends on the facts, but Illinois law and existing legal principles may allow victims to pursue claims against multiple parties.

Key Takeaways

- AI deepfakes and non-consensual sexual images can cause serious emotional, reputational, and professional harm, even when the content is entirely fabricated.

- The person who creates this type of content may be held liable under Illinois law for privacy violations, emotional distress, and related claims.

- Individuals who share or distribute deepfake sexual content can also face legal consequences, especially if they knew or should have known it was harmful.

- In some cases, online platforms, employers, or organizations may have legal exposure depending on their role and response to the content.

- Victims may have the right to pursue financial damages, force removal of the content, and take legal action to prevent further distribution.

- Acting quickly to preserve evidence and seek legal guidance can be critical to protecting your rights.

Understanding Deepfakes and Digital Exploitation

Deepfakes are images, videos, or audio recordings created or altered using artificial intelligence. In many cases, a person’s face or likeness is placed into explicit content, making it appear authentic.

These situations often overlap with broader forms of digital exploitation, including the non-consensual sharing of intimate images. What makes deepfakes particularly harmful is that a victim does not need to have ever created or shared private content in the first place. The material can be entirely fabricated, yet still believable enough to cause real-world consequences.

That distinction matters, but it does not necessarily shield the person responsible from legal liability.

The Real-World Impact on Victims

People targeted by deepfake sexual content often experience more than embarrassment. The harm can affect nearly every aspect of their lives.

Reputational damage can spread quickly online, especially if the content is shared across multiple platforms. In some cases, victims face harassment, professional setbacks, or strained personal relationships. Emotional distress is also common, particularly when the content continues circulating despite efforts to remove it.

Illinois courts recognize these types of harms in other privacy-related cases, and those same principles are increasingly being applied in cases involving AI-generated content.

Who May Be Held Liable for AI-Generated Deepfakes and Non-Consensual Sexual Images?

Liability in deepfake cases is not always limited to a single person. Depending on how the content was created and shared, several parties may be legally responsible.

The Person Who Created the Content

In most cases, the individual who created the deepfake is the starting point for liability. Using someone’s likeness without consent, especially in a sexual context, can give rise to multiple legal claims.

These may include invasion of privacy, misappropriation of likeness, or intentional infliction of emotional distress. If the content falsely portrays someone in a way that harms their reputation, defamation may also be a factor.

Even though the image or video is not real, the law often focuses on the impact it has on the victim, not just how it was created.

Those Who Share or Distribute It

Liability may also extend beyond the original creator. Anyone who knowingly shares or republishes non-consensual sexual content can contribute to the harm.

In Illinois, the unauthorized dissemination of private sexual images is already addressed under state law. While deepfakes introduce new complexities, distributing this type of material, particularly with knowledge of its nature, can still expose someone to legal consequences.

The broader the distribution, the greater the potential harm, which is something courts may consider when evaluating a claim.

Online Platforms and Websites

Online platforms play a central role in how this content spreads, but holding them accountable can be more complicated.

Many websites rely on federal protections under Section 230 of the Communications Decency Act, which can limit liability for user-generated content. However, those protections are not absolute. If a platform is notified of harmful or unlawful content and fails to act, or if it plays a more active role in promoting that content, legal exposure may increase.

Even when financial liability is limited, platforms may still be compelled to remove harmful material.

AI Developers and Technology Companies

Another emerging question is whether the companies behind AI tools like Grok can be held responsible.

In general, developers are not automatically liable for how users misuse their technology. However, courts are beginning to examine situations where companies may have failed to implement reasonable safeguards or knowingly allowed harmful uses of their platforms.

This area of law is still developing, but it is likely to evolve as AI tools become more powerful and more widely used.

Employers and Organizations

In certain cases, liability may extend into the workplace. If deepfake content is created or shared in a professional setting, or as part of workplace harassment, employers may have legal obligations to respond.

A failure to address known misconduct could expose an organization to claims, particularly if the conduct contributes to a hostile work environment.

Legal Options for Victims in Illinois

Victims of deepfake abuse may have several legal avenues, depending on the circumstances.

Civil claims often focus on privacy violations, emotional distress, and reputational harm. Illinois law also provides protections against the non-consensual distribution of sexual images, which may apply even in cases involving digitally created content.

In addition to seeking financial damages, victims may pursue court orders requiring the removal of the content or preventing further distribution. Acting quickly can be important, especially when the material is spreading online.

Challenges in These Cases

Deepfake cases present unique challenges. Identifying the person responsible is not always straightforward, particularly when content is posted anonymously or hosted on multiple platforms.

There are also legal questions about how existing laws apply to new technology. Courts are still working through these issues, which can make outcomes less predictable than in more established areas of law.

Despite these challenges, legal remedies are increasingly available, and more victims are beginning to take action.

Taking the Next Step

If you discover a deepfake or non-consensual sexual image involving you, it’s important to act promptly. Preserving evidence and reporting the content to the platform are often the first steps, but legal guidance can be critical in understanding your options.

At Ankin Law, our non-consensual porn injury lawyers recognize how personal and overwhelming these situations can be. Our team approaches every case with discretion and a focus on protecting our clients’ rights and reputations.

If you have questions about a claim, contact Ankin Law for a confidential consultation. We can help you understand the legal landscape and determine the next steps based on your specific situation. Call 312-600-0000.

Frequently Asked Questions

Can I take legal action over a deepfake?

In many situations, yes. If the content causes harm to your reputation, privacy, or emotional well-being, you may have grounds for a claim.

Does Illinois law cover AI-generated sexual images?

While laws are still evolving, existing statutes related to non-consensual sexual images and privacy violations may apply, depending on the facts.

What if the person responsible is anonymous?

An attorney may be able to take steps to identify the individual through legal processes, particularly if the content was posted online.

Can the content be removed?

In many cases, yes. Legal action may help compel platforms or individuals to remove harmful material.